The rise of the data-driven enterprise has made intelligent data transformation essential. Without it, leaders end up trapped in fragmented data silos that hinder organizational growth. This is the harsh reality: companies operating at scale today have a serious data problem, and, surprisingly, it is not a shortage of data. The real culprit is data that is operationally distributed across the wrong sources, governed by the wrong people, and structured for the wrong purposes. Obsolete legacy systems and a poor data management strategy make these challenges worse.

This is where a modern data warehousing strategy helps. It allows enterprise CEOs to improve workflows and streamline human-machine interactions. By embedding centralized data into every decision and process, organizations gain a significant advantage. This shift—from predictive systems to practical AI-driven intelligence—drives continuous performance improvement and speeds up decision-making.

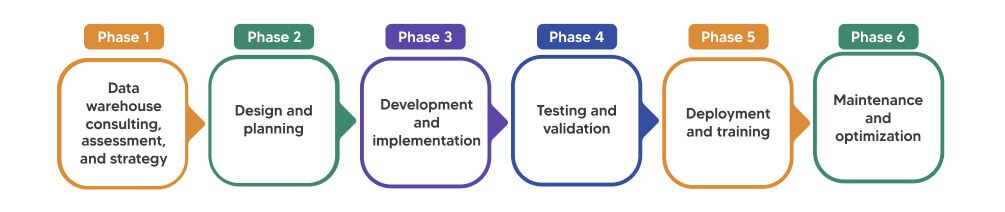

This blog explains why delaying a modern data warehousing strategy can cost more than most organizations expect. It shows where a data warehouse fits in the broader enterprise stack. You’ll also learn what a successful rollout plan looks like, from assessment to optimization. The article addresses the gap between legacy platforms and cloud-native warehouses. Finally, it breaks down the real operational value of a centralized data platform.

Why does a modern centralized data platform matter, and where does it fit?

A modern data warehouse is a cloud-native storage system designed to run fast analytical queries across large, structured datasets. Platforms like Snowflake, Google BigQuery, Amazon Redshift, and Azure Synapse define this category. They separate compute from storage, scale elastically, and support concurrent users without performance degradation.

In an enterprise architecture, the modern data warehouse sits between raw data sources and business intelligence tools. It ingests data from transactional systems, CRMs, ERPs, cloud applications, and third-party feeds. It structures, governs, and serves that data to dashboards, reports, and downstream ML pipelines.

Where it fits in practice: companies that have already built a data lake often use the warehouse as a curated, query-ready layer on top of it. Those still on-premises are migrating to reduce costs and increase speed. Those starting fresh are building cloud-native stacks with the warehouse at the centre.

The shift matters because the alternative, a fragmented stack of data marts, spreadsheets, and siloed reporting tools, produces inconsistent metrics, slow decision cycles, and an analytics team that spends most of its time wrangling data rather than generating insight. According to Gartner, poor data quality costs organisations an average of $12.9 million per year, and that exposure tends to grow as the business scales.

What is the difference between traditional platforms and a modern data warehouse?

The table below compares data lakes, data marts, and data lakehouses against a modern data warehouse across key dimensions relevant to enterprise analytics decisions.

| Dimension | Data Lake | Data Mart / Lakehouse | Modern Data Warehouse |
|---|---|---|---|
| Primary Use | Raw data storage | Dept-level or hybrid analytics | Enterprise-wide analytics |
| Data Structure | Unstructured / semi-structured | Structured or mixed | Structured, governed |
| Query Performance | Slow without optimisation | Moderate | High, optimised |
| Governance | Weak by default | Partial | Strong, centralised |
| Scalability | High (storage) | Moderate | High (compute + storage) |
| BI Readiness | Requires transformation layer | Partial | Native, out-of-the-box |
| Best For | Data science, ML pipelines | Team-specific reporting | Enterprise intelligence |

The key takeaway is not that other platforms have become obsolete for data-driven enterprises. Data lakes continue to play an important role in supporting data science and machine learning workloads, and data marts can still address specific departmental requirements. However, when it comes to delivering enterprise-wide business intelligence with strong governance, consistency, and data readiness, a well-implemented modern data warehouse provides a future-ready technical foundation.

What does a modern data warehouse reference architecture look like?

A production-grade modern data warehouse architecture in 2025 follows a layered, cloud-native pattern. At the ingestion layer, pipelines built with tools like Fivetran, and orchestrated with tools like Apache Airflow, pull data from source systems via batch loads or real-time streaming. Raw data lands in a staging zone, typically in cloud object storage, before transformation. The transformation layer, often built with dbt, applies business logic, deduplication, and quality checks, producing clean, modelled datasets in the warehouse itself. Above this sit semantic layers and BI tools such as Looker, Power BI, or Tableau, which connect to the warehouse via governed, role-based access.
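
The layered flow described above can be sketched in miniature with Python's built-in `sqlite3` module standing in for a cloud warehouse. This is a simplified illustration under stated assumptions, not a production pipeline: the table names (`stg_orders`, `fct_orders`) and the sample rows are hypothetical, and in a real stack the load step would be handled by an ingestion tool and the SQL transformation by a tool such as dbt.

```python
import sqlite3

# Hypothetical raw extract, including a duplicate delivery and a row with no key.
SAMPLE_ROWS = [
    ("o1", "emea", "100.0"),
    ("o1", "emea", "100.0"),  # duplicate delivered twice by the source
    ("o2", " apac", "50"),
    ("o3", "EMEA", "25.5"),
    (None, "apac", "10"),     # missing business key, rejected in transformation
]

def run_layered_pipeline(raw_rows):
    """Land raw rows in staging, transform them into a modelled table,
    and return a BI-style aggregate. sqlite3 stands in for the warehouse."""
    conn = sqlite3.connect(":memory:")
    cur = conn.cursor()

    # Staging layer: raw data lands as-is, with no business logic applied.
    cur.execute("CREATE TABLE stg_orders (order_id TEXT, region TEXT, amount TEXT)")
    cur.executemany("INSERT INTO stg_orders VALUES (?, ?, ?)", raw_rows)

    # Transformation layer: reject rows failing a basic quality check,
    # standardise region values, cast amounts to a numeric type, deduplicate.
    cur.execute(
        """
        CREATE TABLE fct_orders AS
        SELECT DISTINCT
            order_id,
            UPPER(TRIM(region)) AS region,
            CAST(amount AS REAL) AS amount
        FROM stg_orders
        WHERE order_id IS NOT NULL AND amount IS NOT NULL
        """
    )

    # Serving layer: the kind of query a BI tool would issue against the model.
    result = cur.execute(
        "SELECT region, SUM(amount) FROM fct_orders GROUP BY region ORDER BY region"
    ).fetchall()
    conn.close()
    return result

print(run_layered_pipeline(SAMPLE_ROWS))  # [('APAC', 50.0), ('EMEA', 125.5)]
```

Leaving the staging table untouched is the design choice that makes this pattern forgiving: if a transformation rule turns out to be wrong, it can be corrected and re-run without re-extracting from the source systems.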

Security and governance are not add-ons in this architecture. Column-level security, row-level access policies, data cataloguing, and lineage tracking are built in from day one. This matters for regulated industries and for any enterprise that needs audit-ready reporting. Cloud cost management is also embedded. Compute clusters are auto-suspended when idle. Queries are profiled and optimised. Storage tiering moves infrequently accessed data to lower-cost options. The result is a system that scales with the business without proportional cost increases.
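
Row-level access policies and column-level security are normally declared inside the warehouse itself (Snowflake, for example, offers row access policies and masking policies). The Python sketch below only illustrates the logic such policies encode: a role sees only the rows for the regions it is permitted, and sensitive columns are masked unless the role is explicitly allowed to see them. The roles, column names, and policy tables are hypothetical.

```python
# Hypothetical policy definitions, for illustration only.
# Row-level policy: the regions each role is allowed to query.
ROW_POLICY = {
    "emea_analyst": {"EMEA"},
    "global_finance": {"EMEA", "APAC", "AMER"},
}
# Column-level policy: roles allowed to see each sensitive column unmasked.
UNMASKED_COLUMNS = {
    "email": {"global_finance"},
}

def apply_policies(role, rows):
    """Return the rows a role may see, with sensitive columns masked."""
    allowed_regions = ROW_POLICY.get(role, set())  # unknown roles see no rows
    visible = []
    for row in rows:
        if row["region"] not in allowed_regions:
            continue  # row-level filter
        out = dict(row)  # copy so the source rows are never modified
        for column, permitted_roles in UNMASKED_COLUMNS.items():
            if column in out and role not in permitted_roles:
                out[column] = "***MASKED***"  # column-level masking
        visible.append(out)
    return visible

CUSTOMERS = [
    {"id": 1, "region": "EMEA", "email": "a@example.com"},
    {"id": 2, "region": "APAC", "email": "b@example.com"},
]

print(apply_policies("emea_analyst", CUSTOMERS))
# [{'id': 1, 'region': 'EMEA', 'email': '***MASKED***'}]
```

Unknown roles fall back to an empty set of regions, so the sketch denies by default; a policy that failed open would expose data whenever a role was misconfigured.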

Business case for data warehousing: How do centralized data platforms solve data silo challenges?

Data silos are more than an operational inconvenience—they create a structural risk for the organization. When sales, finance, operations, and product teams maintain separate data repositories, there is no unified source of truth. Critical metrics such as customer lifetime value, revenue, or churn are calculated differently across departments, leading to inconsistencies and misalignment.

As a result, leadership often spends time resolving data discrepancies rather than focusing on strategic decisions. A centralized data platform addresses this challenge by establishing a single, governed source of record for the enterprise, ensuring that every team accesses and analyzes the same consistent, reliable data.
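
One way to picture a single governed source of record is a set of business metrics defined once, centrally, and reused by every team instead of being recalculated per department. The sketch below is a hypothetical metric registry: the metric names, the churn formula (customers lost in a period divided by customers at its start), and the input fields are illustrative assumptions, not a standard definition.

```python
# Hypothetical central registry: each business metric is defined exactly once.
def _churn_rate(period):
    """Customers lost during the period / customers at the start of the period."""
    if period["customers_at_start"] <= 0:
        raise ValueError("customers_at_start must be positive")
    return period["customers_lost"] / period["customers_at_start"]

def _net_revenue(period):
    """Gross revenue minus refunds for the period."""
    return period["gross_revenue"] - period["refunds"]

GOVERNED_METRICS = {
    "churn_rate": _churn_rate,
    "net_revenue": _net_revenue,
}

def compute_metric(name, period):
    """Every dashboard calls this instead of re-implementing the formula."""
    if name not in GOVERNED_METRICS:
        raise KeyError(f"'{name}' is not a governed metric")
    return GOVERNED_METRICS[name](period)

# Hypothetical quarter; sales and finance dashboards both read from the same call.
Q3 = {"customers_at_start": 2000, "customers_lost": 150,
      "gross_revenue": 500000, "refunds": 12000}
print(compute_metric("net_revenue", Q3))  # 488000
```

Raising an error for an unregistered metric is deliberate: it pushes teams to add a definition to the shared registry rather than quietly computing their own variant.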

Consider the following real-world modern data warehousing scenarios from Kellton's delivery work:

1. SQL Server Data Warehouse to Snowflake for Scalable Performance

A global survey platform struggled with a 10+ year-old SQL Server data warehouse that could not efficiently manage ~12TB of growing data, causing performance bottlenecks and rising infrastructure costs. Kellton migrated the legacy environment to Snowflake using a metadata-driven approach and automated SQL conversion accelerators to ensure data accuracy and speed. The transformation migrated 120+ dashboards and 1,300+ database objects, improved batch processing and reporting performance, and reduced data center operational costs. The modern Snowflake architecture delivered a highly scalable analytics platform, enabling faster insights and supporting the company’s future data growth and innovation goals.

2. AWS Cloud Data Lake and Enterprise Data Warehouse Implementation

A global automation and security company faced fragmented data sources and outdated reporting systems, making it difficult for business teams to access reliable insights for partner performance, renewals, and pricing decisions. Kellton implemented an AWS-based data lake and enterprise data warehouse, integrating multiple systems including Salesforce, Oracle Fusion, and engineering datasets. Using AWS Glue, Lambda, and Python, the team built a scalable data pipeline and delivered Tableau dashboards for unified reporting. The solution standardized information delivery across departments, enabled interactive analytics, and improved decision-making with consistent, reliable data across the organization.

3. Data Warehouse Migration to AWS Cloud (Strategy & Best Practices)

Traditional on-premises data warehouses often suffer from scalability limits, high maintenance costs, and inflexible architectures, making it difficult to process growing data volumes or support modern analytics needs. Migrating to AWS enables organizations to modernize their data infrastructure with elastic scalability, improved performance, and integrated analytics capabilities. Services such as Amazon Redshift, for warehouse-scale analytics, and AWS Lake Formation, for governing the underlying data lake, allow businesses to process large datasets efficiently while reducing infrastructure costs through a pay-as-you-go model. The result is greater agility, improved data accessibility, and faster insights, helping organizations innovate quickly and make data-driven decisions at scale.

Modern data warehouse strategy rollout: The step-by-step approach

Phase 1: Data warehouse consulting, assessment, and strategy

The journey begins with a deep discovery and consulting phase. Stakeholders across business units are engaged to understand KPIs, analytics requirements, and existing data challenges. At the same time, the current data landscape is assessed—covering data sources, volumes, formats, and system dependencies. Based on business needs and compliance considerations, an appropriate deployment model (cloud, on-premises, or hybrid) is defined. This phase concludes with a clear roadmap outlining timelines, budgets, and milestones. A thorough assessment here is critical, as weak planning often leads to architectural decisions that become costly to reverse later.

Phase 2: Design and planning

Once the strategy is defined, the focus shifts to designing the data architecture. This includes creating conceptual, logical, and physical data models that define how information will be structured and organized. The team also determines the ETL or ELT framework, storage architecture, and security protocols. Technology selection—whether for data ingestion, transformation, warehousing, or BI—should align with the data model and business objectives. Successful projects prioritize the data architecture first rather than forcing data structures to adapt to preselected tools.
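
As a small illustration of the physical-model step, the sketch below creates a hypothetical star schema (one fact table and two dimension tables) in an in-memory SQLite database and runs the kind of join a report would issue. The table names, columns, and rows are assumptions for illustration; a real design would follow the conceptual and logical models agreed with the business, and the target warehouse's own DDL dialect.

```python
import sqlite3

# Hypothetical physical model: a star schema with one fact and two dimensions.
# Foreign keys are declared for documentation; SQLite only enforces them
# when PRAGMA foreign_keys is switched on.
SCHEMA = """
CREATE TABLE dim_customer (
    customer_key INTEGER PRIMARY KEY,
    customer_name TEXT NOT NULL,
    segment TEXT NOT NULL
);
CREATE TABLE dim_date (
    date_key INTEGER PRIMARY KEY,  -- e.g. 20250131
    year INTEGER NOT NULL,
    month INTEGER NOT NULL
);
CREATE TABLE fact_sales (
    customer_key INTEGER NOT NULL REFERENCES dim_customer(customer_key),
    date_key INTEGER NOT NULL REFERENCES dim_date(date_key),
    revenue REAL NOT NULL
);
"""

def revenue_by_segment():
    """Build the schema, load sample rows, and aggregate revenue by segment and month."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(SCHEMA)
    conn.executemany("INSERT INTO dim_customer VALUES (?, ?, ?)",
                     [(1, "Acme", "Enterprise"), (2, "Beta", "SMB")])
    conn.executemany("INSERT INTO dim_date VALUES (?, ?, ?)",
                     [(20250131, 2025, 1), (20250228, 2025, 2)])
    conn.executemany("INSERT INTO fact_sales VALUES (?, ?, ?)",
                     [(1, 20250131, 1200.0), (2, 20250131, 300.0), (1, 20250228, 800.0)])
    rows = conn.execute(
        """
        SELECT c.segment, d.month, SUM(f.revenue)
        FROM fact_sales f
        JOIN dim_customer c ON f.customer_key = c.customer_key
        JOIN dim_date d ON f.date_key = d.date_key
        GROUP BY c.segment, d.month
        ORDER BY c.segment, d.month
        """
    ).fetchall()
    conn.close()
    return rows

print(revenue_by_segment())
# [('Enterprise', 1, 1200.0), ('Enterprise', 2, 800.0), ('SMB', 1, 300.0)]
```

Keeping measures in a narrow fact table and descriptive attributes in dimensions is what lets BI tools slice the same numbers by any dimension without redefining them per report.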

Phase 3: Development and implementation

During this phase, data pipelines are built to extract, transform, and load information from various source systems into the data warehouse. Data quality controls are implemented to ensure accuracy, including deduplication, null handling, and consistent data typing. The infrastructure is configured with appropriate schemas, databases, user roles, and access policies. Collaboration between data engineers and business analysts is essential here to ensure the transformed data aligns with real business questions and reporting needs.
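
The three controls named above (deduplication, null handling, and consistent data typing) can be sketched in plain Python. This is an illustrative example only: the record shape, the choice of `customer_id` as the deduplication key, and the rule of defaulting a missing `country` to `"UNKNOWN"` are hypothetical, and in practice these rules would usually live in the pipeline's transformation layer.

```python
from datetime import date
from decimal import Decimal, InvalidOperation

def clean_records(records):
    """Apply null handling, deduplication, and type normalisation.

    Returns (clean_rows, rejected_rows). The rules are illustrative assumptions.
    """
    seen_ids = set()
    clean, rejected = [], []
    for rec in records:
        raw_id = rec.get("customer_id")
        # Null handling: a record without its business key is rejected.
        if raw_id is None or str(raw_id).strip() == "":
            rejected.append(rec)
            continue
        cid = str(raw_id).strip()
        # Deduplication: keep only the first valid record for each key.
        if cid in seen_ids:
            continue
        # Consistent typing: amounts become Decimal, dates become date objects.
        try:
            amount = Decimal(str(rec["amount"]))
            signup = date.fromisoformat(rec["signup_date"])
        except (KeyError, InvalidOperation, TypeError, ValueError):
            rejected.append(rec)
            continue
        seen_ids.add(cid)
        clean.append({
            "customer_id": cid,
            "amount": amount,
            "signup_date": signup,
            # Null handling: an optional attribute gets an explicit default.
            "country": (rec.get("country") or "UNKNOWN").upper(),
        })
    return clean, rejected

RAW_CUSTOMERS = [
    {"customer_id": " C1 ", "amount": "19.99", "signup_date": "2025-01-15", "country": "de"},
    {"customer_id": "C1", "amount": "19.99", "signup_date": "2025-01-15", "country": "de"},  # duplicate
    {"customer_id": None, "amount": "5", "signup_date": "2025-02-01"},  # missing key
    {"customer_id": 42, "amount": 7, "signup_date": "2025-03-10", "country": None},  # mixed types
    {"customer_id": "C9", "amount": "n/a", "signup_date": "2025-03-11"},  # unparseable amount
]

clean_rows, rejected_rows = clean_records(RAW_CUSTOMERS)
```

Rejected rows are returned rather than silently dropped, so they can be routed to a quarantine table and reviewed with the source-system owners.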

Phase 4: Testing and validation

Before deployment, the system undergoes extensive validation to confirm that data is accurate, complete, and consistent with source systems. User acceptance testing (UAT) is conducted with business users to verify that dashboards, reports, and analytics outputs meet expectations. Performance tuning is also performed to optimize queries and ETL workflows. UAT plays a vital role in this phase, as business users often identify edge cases and usability issues that technical testing alone may overlook.
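
A common first check when validating against source systems is reconciliation: comparing row counts, key coverage, and a control total between the source extract and the loaded warehouse table. The sketch below is a hypothetical helper; the column names and the tolerance for floating-point totals are assumptions, and real test suites usually layer column-level and business-rule checks on top.

```python
import math

def reconcile(source_rows, target_rows, key="id", amount_key="amount", tolerance=1e-6):
    """Compare a source extract with the loaded warehouse table.

    Returns a dict mapping each check name to True (passed) or False (failed).
    """
    source_ids = {row[key] for row in source_rows}
    target_ids = {row[key] for row in target_rows}
    # math.fsum reduces floating-point error when summing many values.
    source_total = math.fsum(row[amount_key] for row in source_rows)
    target_total = math.fsum(row[amount_key] for row in target_rows)
    return {
        "row_count_matches": len(source_rows) == len(target_rows),
        "control_total_matches": math.isclose(source_total, target_total, abs_tol=tolerance),
        "no_missing_ids": source_ids <= target_ids,
    }

# Hypothetical extract, compared with a complete load and a partial load.
SOURCE_EXTRACT = [
    {"id": 1, "amount": 10.5},
    {"id": 2, "amount": 20.25},
    {"id": 3, "amount": 5.0},
]
full_load = reconcile(SOURCE_EXTRACT, list(SOURCE_EXTRACT))
partial_load = reconcile(SOURCE_EXTRACT, SOURCE_EXTRACT[:2])
```

Returning one result per check, instead of a single pass or fail, makes it easier to see in UAT whether a problem is missing rows, changed values, or both.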

Phase 5: Deployment and training

After successful validation, the data warehouse is formally deployed into production. Teams are trained on how to access and interpret data, use BI tools, and follow governance practices. Proper documentation is also created to explain data definitions, pipeline logic, and support procedures. Training is frequently underestimated, yet it is critical for adoption. Even the most advanced data platform cannot deliver value if users do not trust or understand how to use it.

Phase 6: Maintenance and optimization

Post-deployment, the data warehouse requires continuous monitoring and refinement. Performance metrics such as system speed, data freshness, query efficiency, and user engagement are regularly evaluated. As business needs evolve, new data sources, models, and analytics capabilities may need to be added. A modern data warehouse should be treated as a living system—one that evolves alongside the organization’s data strategy. Allocating resources for ongoing optimization ensures the platform continues delivering long-term business value.
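
Data freshness is one of the metrics above that lends itself to automated monitoring. The sketch below flags tables whose last successful load is older than an agreed threshold. The table names and per-table SLA values are hypothetical, and in practice the load timestamps would come from pipeline metadata or the warehouse's own system views.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical freshness SLAs per table.
FRESHNESS_SLA = {
    "fct_orders": timedelta(hours=1),
    "dim_customer": timedelta(hours=24),
}

def stale_tables(last_loaded, now=None):
    """Return the sorted names of tables whose last load breaches their SLA.

    Tables with no recorded load are treated as stale.
    """
    if now is None:
        now = datetime.now(timezone.utc)
    stale = []
    for table, sla in FRESHNESS_SLA.items():
        loaded_at = last_loaded.get(table)
        if loaded_at is None or now - loaded_at > sla:
            stale.append(table)
    return sorted(stale)

check_time = datetime(2025, 6, 1, 12, 0, tzinfo=timezone.utc)
last_loads = {
    "fct_orders": check_time - timedelta(hours=3),    # breaches its 1-hour SLA
    "dim_customer": check_time - timedelta(hours=2),  # within its 24-hour SLA
}
print(stale_tables(last_loads, now=check_time))  # ['fct_orders']
```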

How can Kellton support your data warehousing strategy?

Kellton delivers end-to-end data warehouse consulting and implementation, from infrastructure assessment and platform selection to migration, architecture design, and ongoing optimisation. With hands-on experience across Snowflake, AWS, Azure Synapse, and Google Cloud, Kellton helps enterprises move from fragmented data environments to scalable, centralized, and governed analytics platforms. If you are evaluating a migration or building a data warehousing strategy from the ground up, talk to Kellton's data engineering team.

Frequently asked questions

What are the top 5 data warehouses?

Snowflake, Google BigQuery, Amazon Redshift, Azure Synapse Analytics, and Databricks SQL are the leading modern data warehouse platforms in enterprise use as of 2025. Selection depends on cloud environment, existing tooling, query workload type, and cost structure.

What is the function of a modern data warehouse?

A modern data warehouse consolidates structured data from multiple source systems into a single, governed, query-optimised environment. It supports business intelligence, reporting, and analytics at enterprise scale, with elastic compute, strong access controls, and integrations with BI tools and ML pipelines.

What is the difference between a traditional and modern data warehouse?

Traditional warehouses are on-premises, fixed-capacity systems with high infrastructure costs and limited scalability. Modern data warehouses are cloud-native, separate compute from storage, scale on demand, and support concurrent workloads without performance degradation. They are typically faster to deploy and cheaper to maintain at scale.

What are the three types of data warehouses?

Enterprise data warehouses (EDWs) serve organisation-wide analytics. Data marts are scoped to a specific department or function. Operational data stores (ODSs) support near-real-time operational reporting. Modern cloud warehouses typically subsume the EDW role and can support mart-like views via semantic-layer segmentation.

How to build a data warehouse step by step?

Follow six phases: assess and define strategy, design data models and architecture, develop pipelines and build the warehouse, test and validate with end users, deploy to production with training, then monitor and optimise continuously. Engaging a data warehouse consulting partner for the first two phases can significantly reduce downstream rework.