The insurance industry stands at a crossroads where Artificial Intelligence (AI) is reshaping the fundamental architecture of claims processing. The scale of this shift is no surprise: McKinsey estimated some time ago that advanced AI technologies could generate up to $1.1 trillion in annual value for the global insurance industry. Claims management is a crucial insurance function, and AI is a driving force that helps businesses improve it.

However, realizing these benefits requires more than just implementing AI tools—it demands a strategic overhaul of the underlying data infrastructure that powers modern claims operations. The complexity of modern insurance claims processing extends far beyond simple data collection and storage.

Today's insurers handle massive volumes of structured and unstructured data from multiple touchpoints, including policy documents, medical records, IoT sensors, social media interactions, and real-time fraud detection systems. Insurance fraud costs $6 billion annually, and insurers lose at least 10% of their premium collection to insurance fraud, making the need for sophisticated data platform strategies more critical than ever.

The journey toward AI-driven claims processing excellence is fraught with challenges that traditional data infrastructures simply cannot address. Legacy systems, built for a different era of insurance operations, struggle to support the real-time analytics, predictive modeling, and automated decision-making that modern AI applications demand. Industry projections for 2030 describe claims processing as still a primary function of carriers, but with more than half of claims activities replaced by automation, which highlights the urgent need for insurers to reimagine their data platform strategies.

Insurance data platform strategies serve as the backbone that enables AI-driven transformation, providing the architectural foundation necessary to harness the full potential of artificial intelligence in claims processing. Without a robust, scalable, and intelligent data platform, even the most sophisticated AI algorithms become ineffective, limited by data silos, processing bottlenecks, and integration challenges that prevent seamless information flow across the claims ecosystem.

Why traditional data infrastructures are failing

Traditional data warehouses, designed decades ago for batch processing and periodic reporting, are fundamentally misaligned with the dynamic requirements of modern AI-driven claims processing. These systems were architected when insurance claims involved primarily paper-based documentation and manual review processes, operating under the assumption that data analysis could occur in scheduled, predictable intervals.

The insurance landscape has since evolved dramatically: claims now involve real-time data streams from telematics devices, instant photo uploads from mobile applications, continuous fraud monitoring systems, and immediate customer communication channels. Traditional data warehouse architectures cannot accommodate the velocity, variety, and volume of data that modern insurance operations generate and require for effective AI implementation.

Furthermore, traditional systems often operate in isolation, creating data silos that prevent the comprehensive view necessary for effective AI-driven claims processing. Policy data resides in one system, claims history in another, customer interaction data in a third, and fraud indicators in yet another platform. This fragmented approach makes it impossible to develop the holistic understanding that AI algorithms require to make accurate predictions and automated decisions.

Additionally, legacy systems suffer from fundamental architectural limitations that make real-time processing nearly impossible. Built around Extract, Transform, Load (ETL) processes that operate on scheduled intervals, these systems introduce significant delays between data generation and data availability for analysis. In an era where the percentage of claims being handled virtually and digitally skyrocketed to as high as 55% at one personal lines insurer, these delays create competitive disadvantages and customer dissatisfaction.

The batch processing nature of traditional systems means that fraud detection occurs after the fact rather than during the claims submission process, customer service representatives lack access to real-time claim status updates, and adjusters cannot leverage immediate data insights during field assessments. These limitations not only impact operational efficiency but also create opportunities for fraud and errors that AI-powered real-time systems could prevent.

Moreover, traditional data infrastructures often require extensive manual intervention for data quality management, exception handling, and system maintenance. This manual overhead consumes valuable resources that could be redirected toward strategic AI initiatives while simultaneously introducing human error and processing delays that modern automated systems can eliminate.

Exploring the role of Data Analytics in AI Insurance Claim Processing

Modern data analytics serves as the foundational layer that enables AI-powered transformation in insurance claims processing. These sophisticated platforms go beyond traditional data management to deliver intelligent insights, automated pattern recognition, and predictive capabilities that fundamentally reshape how insurers handle claims from submission to settlement.

Intelligent data management and pattern recognition

Data analytics tools excel at organizing vast amounts of disparate data while automatically identifying and eliminating duplicate entries. McKinsey reports that claims analytics can reduce claim processing times by up to 40%, primarily through advanced pattern recognition capabilities that identify subtle correlations between seemingly unrelated variables.

These platforms seamlessly integrate information from multiple sources, converting unstructured data such as photos, videos, and documents into analyzable formats that AI algorithms can process effectively. Advanced metadata management ensures proper data context and lineage for accurate AI model training.
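
As a minimal sketch of the duplicate elimination described above, assume claim records arrive as dictionaries with hypothetical `policy_id`, `loss_date`, and `amount` fields (these names are illustrative and do not come from any specific product). Keying each record on normalized values lets trivially different copies collapse into one:

```python
from typing import Iterable


def normalize_key(record: dict) -> tuple:
    """Build a comparison key; the field names are illustrative assumptions."""
    return (
        record["policy_id"].strip().upper(),
        record["loss_date"].strip(),
        round(float(record["amount"]), 2),
    )


def deduplicate(records: Iterable[dict]) -> list[dict]:
    """Keep the first record seen for each normalized key, preserving order."""
    seen: set[tuple] = set()
    unique: list[dict] = []
    for record in records:
        key = normalize_key(record)
        if key not in seen:
            seen.add(key)
            unique.append(record)
    return unique


claims = [
    {"policy_id": "p-100 ", "loss_date": "2024-03-01", "amount": "1200.00"},
    {"policy_id": "P-100", "loss_date": "2024-03-01", "amount": 1200},
    {"policy_id": "P-200", "loss_date": "2024-03-05", "amount": 450.5},
]
unique_claims = deduplicate(claims)
```

Exact-key matching like this only catches formatting differences. Near-duplicates (misspelled names, shifted dates) would need fuzzy matching, which is outside the scope of this sketch.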

Comprehensive process analysis and optimization

Data analytics platforms provide unprecedented visibility into claims processing workflows, mapping the entire journey from initial notification through final settlement. Through process mining and performance analytics, these tools identify bottlenecks and improvement opportunities where AI automation can deliver maximum impact.

Performance metrics enable data-driven decisions about process modifications, staff training, and technology investments, creating the analytical foundation necessary for accurately measuring AI-driven improvements.

Advanced fraud detection and risk assessment

Modern analytics platforms represent the frontline defense against insurance fraud, which costs the industry up to $170 billion in potential premium losses annually. These systems maintain comprehensive logs of claim activities, automatically flagging anomalies and suspicious patterns for investigation.

AI pattern recognition can analyze vehicle damage data and detect manipulated images, while machine learning algorithms continuously evolve to identify new fraud schemes and coordinated attacks across multiple claims and policyholders.
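
To make the idea of automatically flagging anomalies concrete, here is a small sketch that uses a modified z-score based on the median absolute deviation. It is a simple statistical stand-in, not the machine learning approach described above, and the sample amounts are made up for illustration:

```python
from statistics import median


def flag_outliers(amounts: list[float], threshold: float = 3.5) -> list[int]:
    """Return the indexes of amounts whose modified z-score exceeds the threshold.

    The 0.6745 constant and the 3.5 cutoff follow the commonly cited
    modified z-score rule; both are tunable assumptions here.
    """
    med = median(amounts)
    mad = median(abs(a - med) for a in amounts)
    if mad == 0:
        return []  # no spread to measure against, so nothing is flagged
    return [
        i for i, a in enumerate(amounts)
        if 0.6745 * abs(a - med) / mad > threshold
    ]


# Hypothetical claim amounts for one repair category.
claim_amounts = [900, 1100, 1000, 950, 1050, 9800]
suspicious = flag_outliers(claim_amounts)
```

A flag from a rule like this would normally open an investigation rather than deny a claim, since a large amount can be legitimate.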

Predictive analytics for strategic planning

Data analytics platforms excel in trend identification and predictive modeling, enabling insurers to anticipate claim volumes, severity patterns, and associated costs. Accenture studies show AI can reduce claims processing time from weeks to just a few days through sophisticated forecasting capabilities. These predictive insights enable more accurate reserving, improved cash flow management, and better customer communication regarding claim expectations and settlement timelines.
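
The forecasting idea can be illustrated with simple exponential smoothing, one of the most basic time-series techniques. This is a sketch under the assumption that monthly claim counts are available as a plain list; the numbers are invented, and a production forecast would likely use a richer model:

```python
def exponential_smoothing_forecast(history: list[float], alpha: float = 0.5) -> float:
    """Return a one-step-ahead forecast using simple exponential smoothing.

    alpha close to 1 weights recent months heavily; alpha close to 0
    produces a smoother, slower-moving forecast.
    """
    if not history:
        raise ValueError("history must contain at least one observation")
    if not 0 < alpha <= 1:
        raise ValueError("alpha must be in (0, 1]")
    level = history[0]
    for value in history[1:]:
        level = alpha * value + (1 - alpha) * level
    return level


# Hypothetical monthly claim counts.
monthly_claims = [100, 120, 110, 130]
next_month = exponential_smoothing_forecast(monthly_claims, alpha=0.5)
```

Because this method tracks only a level, it ignores trend and seasonality, which matter for reserving in lines with strong seasonal patterns.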

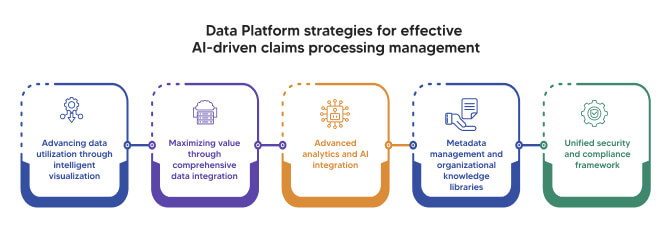

Data Platform strategies for effective AI-driven claims processing management

Advancing data utilization through intelligent visualization

Advanced data utilization requires sophisticated tools that transform complex insurance data into actionable insights through intelligent visualization platforms. These systems process vast amounts of information from multiple sources and present it in formats that enable rapid decision-making for both human analysts and AI algorithms.

Dashboard technology provides unified views of critical performance indicators, claims metrics, and analytical results with AI-powered personalization based on user roles and priorities. Claims adjusters access real-time case information and fraud risk assessments, while managers monitor portfolio performance and resource utilization patterns, enabling better decision-making in claim settlement processes through comprehensive information presentation.

Maximizing value through comprehensive data integration

Data integration serves as the foundation for successful AI-driven claims processing by consolidating real-time data streams from policy systems, customer platforms, IoT devices, and external providers. McKinsey research shows that claims analytics can reduce claim processing times by up to 40% through effective integration strategies that eliminate manual reconciliation processes.

Comprehensive integration streamlines operations by providing AI algorithms with complete, consistent information while eliminating data duplication and ensuring information consistency across underwriting, claims, and customer service functions. This seamless information flow creates the foundation for accurate forecasting and enables sophisticated predictive models that consider multiple variables simultaneously.
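
As a rough illustration of consolidation, the sketch below joins policy, claims, and interaction records on a shared key. It assumes every source carries a `policy_id` field, which is a hypothetical simplification; real systems often need key mapping first:

```python
def build_unified_view(policies, claims, interactions):
    """Group claims and interactions under their policy.

    Returns the per-policy view plus any rows whose policy_id matched
    no known policy, so that they can be reconciled rather than lost.
    """
    view = {
        p["policy_id"]: {"policy": p, "claims": [], "interactions": []}
        for p in policies
    }
    orphans = []
    for kind, rows in (("claims", claims), ("interactions", interactions)):
        for row in rows:
            entry = view.get(row["policy_id"])
            if entry is None:
                orphans.append(row)
            else:
                entry[kind].append(row)
    return view, orphans


# Illustrative records only.
policies = [{"policy_id": "P-1", "holder": "A. Lee"}]
claims = [
    {"policy_id": "P-1", "claim_id": "C-1"},
    {"policy_id": "P-1", "claim_id": "C-2"},
    {"policy_id": "P-9", "claim_id": "C-3"},
]
interactions = [{"policy_id": "P-1", "channel": "phone"}]
view, orphans = build_unified_view(policies, claims, interactions)
```

Returning the unmatched rows explicitly is a deliberate choice: silently dropping them would hide exactly the reconciliation problems that integration is meant to surface.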

Advanced analytics and AI integration

The evolution from traditional statistical analysis to advanced analytics represents a fundamental shift in claims processing capabilities. Deloitte studies indicate that AI-driven underwriting processes can decrease costs by up to 50%, while AI systems augment statistical models with sophisticated pattern recognition that predicts claim severity and identifies abnormal patterns.

Machine learning algorithms trained on comprehensive datasets can eliminate human bias while ensuring consistent application of policy terms and claims procedures. The continuous learning nature of AI systems means that processing accuracy and efficiency improve over time, creating sustainable competitive advantages for insurers investing in advanced analytics platforms.

Metadata management and organizational knowledge libraries

Comprehensive metadata management enables deeper insights and contextual understanding that amplifies AI effectiveness throughout the organization. Recent surveys show that 80% of insurers are either already using AI or planning implementation within the next year, making robust metadata libraries essential for rapid AI deployment across different claim categories.

Metadata libraries cataloging claim types, policy terms, and business rules create organizational knowledge repositories accessible by both human users and AI systems. This indexing capability enables teams to apply AI models consistently across different claim categories while maintaining appropriate customization, ensuring institutional knowledge remains accessible as personnel change over time.
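
A metadata library of this kind can be pictured as a small catalog keyed by claim type. The sketch below is hypothetical: the class names, fields, and sample entries are assumptions chosen for illustration, not the schema of any particular metadata tool:

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ClaimTypeMetadata:
    """Catalog entry describing one claim type (illustrative fields)."""
    claim_type: str
    policy_terms: tuple[str, ...]
    business_rules: tuple[str, ...]
    owner: str


class MetadataLibrary:
    """In-memory catalog that both people and services can query."""

    def __init__(self) -> None:
        self._entries: dict[str, ClaimTypeMetadata] = {}

    def register(self, entry: ClaimTypeMetadata) -> None:
        self._entries[entry.claim_type] = entry

    def lookup(self, claim_type: str) -> Optional[ClaimTypeMetadata]:
        return self._entries.get(claim_type)

    def find_by_rule(self, rule: str) -> list[str]:
        """List claim types that apply a given business rule."""
        return sorted(
            name for name, entry in self._entries.items()
            if rule in entry.business_rules
        )


library = MetadataLibrary()
library.register(ClaimTypeMetadata(
    "auto_glass", ("deductible_waiver",), ("photo_required",), "motor-claims"))
library.register(ClaimTypeMetadata(
    "auto_collision", ("standard_deductible",),
    ("photo_required", "police_report"), "motor-claims"))
photo_claim_types = library.find_by_rule("photo_required")
```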

Unified security and compliance framework

Unified security models ensure sensitive information protection throughout the entire claims processing lifecycle while addressing complex data privacy regulations like GDPR and CCPA. Deloitte research emphasizes the importance of AI-powered fraud detection systems that can identify multibillion-dollar drains on consumers while maintaining comprehensive security frameworks.

Advanced threat detection capabilities leverage AI technologies to identify suspicious activities and potential data breaches before they impact operations. Machine learning algorithms establish baseline patterns for normal data access, automatically flagging anomalies that warrant investigation while providing the detailed audit logs necessary for compliance reporting and incident response.

AI Claims Management: Transforming traditional processes for automated decision-making using agentic AI

The integration of artificial intelligence (AI) into insurance claims processing fundamentally reshapes operational paradigms, moving beyond traditional automation to enable data-driven, scalable, and adaptive systems. Aviva's 2024 investor report highlighted £60 million ($82 million) in savings within its motor claims division, underscoring the financial impact of sophisticated AI implementations. This transformation leverages advanced computational techniques to optimize the entire claims lifecycle while enabling continuous learning and scalability to handle fluctuating claim volumes without proportional cost increases. Let’s delve deeper into this and understand how Agentic AI is reshaping the traditional claim processing dynamics.

Technical Architecture of AI-Driven Claims Processing

AI-powered claims processing integrates multiple advanced technologies into a cohesive system. The core components of an AI-centered technical architecture are:

- Natural Language Processing (NLP): This technique utilizes transformer-based models (e.g., BERT, RoBERTa) to extract and contextualize unstructured data from claims documents, such as policyholder submissions and adjuster reports, achieving high accuracy in intent recognition and entity extraction.

- Computer Vision: Employs convolutional neural networks (CNNs) and object detection frameworks (e.g., YOLOv8, Faster R-CNN) for automated damage assessment from images and videos, enabling precise identification of damage scope and severity.

- Predictive Modeling: Leverages gradient boosting machines (e.g., XGBoost, LightGBM) and deep learning models for fraud detection, analyzing patterns in historical and real-time data to flag anomalies with over 90% precision in high-risk cases.

- Machine Learning for Settlement Optimization: Applies reinforcement learning and decision-tree-based algorithms to recommend settlement amounts, balancing speed, fairness, and cost efficiency while adhering to regulatory constraints.

These components form an integrated ecosystem that continuously improves through feedback loops, reducing operational costs and enhancing decision-making accuracy.

Strategic Implementation Roadmap

Phase 1: Foundation Building (Months 1-6)

Step 1: Data Infrastructure Assessment

- Audit and Integration: Conduct a comprehensive audit of existing data sources (e.g., CRM, ERP, claims databases), evaluating data quality, schema consistency, and integration capabilities using tools like Apache Kafka for real-time data streaming.

- Silo Mitigation: Identify and address data silos by implementing ETL (Extract, Transform, Load) pipelines, utilizing frameworks like Apache Airflow for orchestration.

- Governance and Security: Establish data governance frameworks compliant with GDPR, CCPA, and insurance-specific regulations (e.g., NAIC guidelines). Implement metadata management with tools like Apache Atlas and secure data with encryption standards (e.g., AES-256).

- Technical Deliverables:

  - Data quality reports with metrics (completeness, accuracy, consistency).

  - Unified data schema and integration roadmap.
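
Two of the data quality metrics named above, completeness and consistency, can be computed with very little code. The following sketch assumes records are dictionaries and uses made-up field names; accuracy is omitted because it requires a trusted reference source to compare against:

```python
def completeness(records: list[dict], required_fields: list[str]) -> float:
    """Share of required field values that are present and non-empty."""
    total = len(records) * len(required_fields)
    if total == 0:
        return 1.0
    filled = sum(
        1 for r in records for f in required_fields
        if r.get(f) not in (None, "")
    )
    return filled / total


def consistency(records: list[dict], field: str, allowed: set) -> float:
    """Share of records whose field holds one of the allowed values."""
    if not records:
        return 1.0
    valid = sum(1 for r in records if r.get(field) in allowed)
    return valid / len(records)


# Illustrative sample with one missing amount, one blank id, one odd status.
sample = [
    {"claim_id": "C1", "status": "open", "amount": 100},
    {"claim_id": "C2", "status": "OPEN", "amount": None},
    {"claim_id": "", "status": "closed", "amount": 50},
    {"claim_id": "C4", "status": "closed", "amount": 75},
]
completeness_score = completeness(sample, ["claim_id", "amount"])
consistency_score = consistency(sample, "status", {"open", "closed"})
```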

Step 2: Technology Stack Planning

- Cloud Platform Selection: Choose scalable cloud platforms (e.g., AWS, Azure, Google Cloud) with support for distributed computing and AI workloads. Prioritize Kubernetes-based architectures for containerized deployments.

- AI/ML Tools: Select frameworks like TensorFlow, PyTorch, or Scikit-learn for model development, and platforms like Databricks for unified analytics.

- Security Framework: Design a zero-trust security model with role-based access control (RBAC) and compliance with SOC 2 and ISO 27001 standards.

- Legacy Integration: Plan API-based integration for legacy systems using RESTful or GraphQL interfaces, ensuring backward compatibility.
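
The role-based access control mentioned in the security framework can be sketched as a deny-by-default permission check. The roles and permission strings below are hypothetical examples, not a recommended permission model:

```python
# Hypothetical role-to-permission mapping for a claims platform.
ROLE_PERMISSIONS = {
    "adjuster": frozenset({"claim:read", "claim:update"}),
    "fraud_analyst": frozenset({"claim:read", "fraud_score:read"}),
    "auditor": frozenset({"claim:read", "audit_log:read"}),
}


def is_allowed(roles: list[str], permission: str) -> bool:
    """Grant access only if an assigned role explicitly lists the permission.

    Unknown roles map to an empty permission set, so the default is deny.
    """
    return any(
        permission in ROLE_PERMISSIONS.get(role, frozenset())
        for role in roles
    )


can_update = is_allowed(["adjuster"], "claim:update")
can_see_score = is_allowed(["adjuster"], "fraud_score:read")
```

In a zero-trust design this check would run on every request, alongside authentication and audit logging, rather than once at login.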

Step 3: Pilot Program Development

- Pilot Scope: Select high-volume, low-complexity claim types (e.g., minor auto claims) for initial deployment, minimizing risk while testing scalability.

- Metrics and KPIs: Define success metrics, including processing time reduction (target: 50% decrease), accuracy (target: 95% for automated decisions), and cost savings (target: 20-30%).

- Change Management: Develop training programs using e-learning platforms and establish feedback loops with tools like Jira for iterative improvements.

- Technical Deliverables:

  - Pilot deployment pipeline using CI/CD tools (e.g., Jenkins, GitLab CI).

  - Monitoring dashboards with Prometheus and Grafana for real-time performance tracking.
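
The pilot targets listed above (50% processing time reduction, 95% accuracy, and at least 20% cost savings) can be checked with straightforward arithmetic. This sketch uses invented pilot figures, and the function and key names are illustrative:

```python
def pct_reduction(baseline: float, current: float) -> float:
    """Percentage decrease from baseline to current."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (baseline - current) / baseline * 100


# Targets taken from the pilot KPIs; cost uses the lower bound of 20-30%.
TARGETS = {"time_reduction_pct": 50.0, "accuracy_pct": 95.0, "cost_savings_pct": 20.0}


def evaluate_pilot(baseline_hours, pilot_hours, correct, total,
                   baseline_cost, pilot_cost) -> dict:
    """Return each KPI as a (value, target_met) pair."""
    results = {
        "time_reduction_pct": pct_reduction(baseline_hours, pilot_hours),
        "accuracy_pct": correct / total * 100,
        "cost_savings_pct": pct_reduction(baseline_cost, pilot_cost),
    }
    return {name: (value, value >= TARGETS[name]) for name, value in results.items()}


# Hypothetical pilot: 48h down to 20h, 940 of 1000 decisions correct,
# cost per 1000 claims down from 100000 to 78000.
report = evaluate_pilot(48, 20, 940, 1000, 100_000, 78_000)
```

In this invented example the time and cost targets are met, while accuracy falls short of 95%, which would argue for further model work before scaling the pilot.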

Phase 2: Core Implementation (Months 6-18)

Step 4: Data Platform Deployment

- Unified Platform: Deploy a data lakehouse architecture (e.g., Delta Lake, Snowflake) to support structured and unstructured data, enabling real-time processing with Apache Spark.

- Analytics Tools: Implement advanced analytics with tools like Tableau or Power BI for visualization and Apache Flink for stream processing.

- Metadata Management: Create comprehensive metadata libraries using tools like Collibra to ensure data traceability and compliance.

- Technical Deliverables:

  - Scalable data ingestion pipelines with fault tolerance.

  - Real-time analytics dashboards for claims processing insights.
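
Fault tolerance in an ingestion pipeline usually combines retries with a place to park records that keep failing. The sketch below shows that pattern in plain Python. The `write` callable and the simulated failures are assumptions for illustration, and a real pipeline would add backoff delays between attempts:

```python
def ingest(records, write, max_attempts: int = 3):
    """Write each record, retrying transient failures.

    Records that still fail after max_attempts go to a dead-letter
    list instead of blocking the rest of the batch.
    """
    dead_letter = []
    written = 0
    for record in records:
        for attempt in range(1, max_attempts + 1):
            try:
                write(record)
                written += 1
                break
            except ConnectionError:
                if attempt == max_attempts:
                    dead_letter.append(record)
    return written, dead_letter


# Simulated sink: C2 fails once and then succeeds, C3 always fails.
attempts: dict[str, int] = {}
store: list[dict] = []


def flaky_write(record: dict) -> None:
    attempts[record["id"]] = attempts.get(record["id"], 0) + 1
    if record["id"] == "C2" and attempts["C2"] < 2:
        raise ConnectionError("transient failure")
    if record["id"] == "C3":
        raise ConnectionError("sink unavailable")
    store.append(record)


written, dead = ingest([{"id": "C1"}, {"id": "C2"}, {"id": "C3"}], flaky_write)
```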

Step 5: AI Model Development

- Model Training: Train ML models on historical claims data using distributed computing frameworks (e.g., Ray, Dask). Fine-tune NLP models for document processing and CNNs for image analysis.

- Fraud Detection: Deploy ensemble models combining XGBoost and LSTM networks for anomaly detection, achieving 90%+ recall on fraudulent claims.

- Automated Decision-Making: Implement decision-support systems using rule-based engines (e.g., Drools) integrated with ML predictions for automated approvals.

- Predictive Analytics: Develop forecasting models for claim volume and severity using time-series analysis (e.g., Prophet, ARIMA).

- Technical Deliverables:

  - Trained ML models with documented performance metrics (e.g., ROC-AUC, F1-score).

  - API endpoints for model inference using FastAPI or Flask.
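
Since recall and F1-score appear as targets and deliverables above, it helps to see how they are derived from predictions. This is a dependency-free sketch with made-up labels, where 1 marks a fraudulent claim:

```python
def precision_recall_f1(y_true: list[int], y_pred: list[int]) -> tuple[float, float, float]:
    """Compute precision, recall, and F1 for the positive class (label 1)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    return precision, recall, f1


# Illustrative ground truth and model output for eight claims.
actual = [1, 1, 1, 0, 0, 0, 0, 1]
predicted = [1, 1, 0, 0, 1, 0, 0, 1]
precision, recall, f1 = precision_recall_f1(actual, predicted)
```

For fraud detection, recall measures how many fraudulent claims are caught, while precision measures how many flagged claims are actually fraudulent. Reporting both guards against a model that looks good on one at the expense of the other.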

Step 6: Process Automation

- Straight-Through Processing (STP): Automate routine claims with robotic process automation (RPA) tools like UiPath, achieving 80%+ STP rates for low-complexity claims.

- Intelligent Document Processing: Deploy OCR and NLP pipelines using Tesseract and SpaCy for document extraction and classification.

- Chatbots and Virtual Assistants: Implement conversational AI with frameworks like Rasa or Dialogflow, supporting 24/7 customer interaction.

- Workflow Management: Automate workflows with BPMN-compliant tools like Camunda, ensuring seamless handoffs between automated and human processes.

- Technical Deliverables:

  - Automated workflow scripts and configurations.

  - Chatbot deployment with NLU performance metrics (e.g., intent accuracy > 90%).
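
Straight-through processing depends on a routing decision that combines business rules with a model score. The sketch below shows one possible shape for that decision. The thresholds, field names, and queue names are all hypothetical, and `fraud_score` is assumed to be a model output between 0 and 1:

```python
from dataclasses import dataclass


@dataclass
class Claim:
    claim_id: str
    amount: float
    policy_active: bool
    fraud_score: float  # assumed model output in [0, 1]


def route(claim: Claim, amount_limit: float = 2000.0, fraud_limit: float = 0.2) -> str:
    """Send a claim to automation only when every rule passes.

    Rules are checked from most to least restrictive, so anything
    uncertain falls through to a human queue rather than auto-approval.
    """
    if not claim.policy_active:
        return "coverage_review"
    if claim.fraud_score >= fraud_limit:
        return "fraud_review"
    if claim.amount > amount_limit:
        return "adjuster_review"
    return "auto_approve"


decisions = [
    route(Claim("C-1", 450.0, True, 0.05)),
    route(Claim("C-2", 450.0, True, 0.60)),
    route(Claim("C-3", 8000.0, True, 0.05)),
    route(Claim("C-4", 450.0, False, 0.05)),
]
```

The STP rate is then simply the share of claims routed to `auto_approve`, which makes the thresholds a direct lever on both automation volume and risk exposure.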

Phase 3: Advanced Optimization (Months 18-36)

Step 7: Ecosystem Integration

- External Data Integration: Connect with third-party data providers (e.g., weather APIs, telematics) using secure API gateways like Apigee.

- IoT Integration: Incorporate IoT sensor data (e.g., vehicle telemetry) using MQTT or Kafka for real-time monitoring.

- Mobile Apps: Develop AI-powered mobile apps with React Native, integrating computer vision for on-device claim submission.

- Regulatory Automation: Implement compliance monitoring with RegTech solutions, ensuring adherence to evolving regulations.

- Technical Deliverables:

  - API integration scripts with OAuth 2.0 authentication.

  - Mobile app source code with embedded AI models.

Step 8: Predictive Capabilities

- Claim Prevention: Deploy predictive models using Bayesian networks to identify high-risk policies and recommend preventive measures.

- Real-Time Risk Assessment: Implement streaming analytics with Apache Kafka Streams for dynamic risk scoring.

- Proactive Communication: Develop automated notification systems using Twilio or SendGrid for real-time customer updates.

- Ecosystem Integration: Enable cross-platform AI workflows with standardized protocols (e.g., OpenAPI).

- Technical Deliverables:

  - Predictive model APIs with documented endpoints.

  - Real-time risk assessment pipelines with sub-second latency.
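
Dynamic risk scoring over a stream comes down to updating a score incrementally as each event arrives, without reprocessing history. The sketch below shows that per-event update in plain Python using an exponentially weighted average. It is a stand-in for the logic a streaming framework would host, and the signal values are invented:

```python
from typing import Optional


class RunningRiskScore:
    """Exponentially weighted risk score updated one event at a time."""

    def __init__(self, alpha: float = 0.3) -> None:
        if not 0 < alpha <= 1:
            raise ValueError("alpha must be in (0, 1]")
        self.alpha = alpha
        self.score: Optional[float] = None

    def update(self, signal: float) -> float:
        """Blend a new risk signal (clamped to [0, 1]) into the score."""
        signal = min(1.0, max(0.0, signal))
        if self.score is None:
            self.score = signal
        else:
            self.score = self.alpha * signal + (1 - self.alpha) * self.score
        return self.score


# Hypothetical telematics-derived risk signals for one policy.
scorer = RunningRiskScore(alpha=0.5)
history = [scorer.update(s) for s in (0.1, 0.1, 0.9)]
```

Each update is constant-time and keeps only one number of state per policy, which is what makes low-latency scoring feasible at high event volumes.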

Step 9: Continuous Evolution

- Performance Monitoring: Use MLflow for model tracking and Prometheus for system performance monitoring, targeting 99.9% uptime.

- Model Refinement: Implement online learning techniques to update models with new data, maintaining accuracy above 95%.

- Complex Claims Automation: Extend automation to high-value claims using hybrid human-AI workflows.

- Emerging Technologies: Prepare for integration of augmented reality (e.g., AR-based damage assessment) and blockchain (e.g., smart contracts for claims settlement).

- Technical Deliverables:

  - Model retraining scripts with automated validation.

  - Proof-of-concept implementations for AR and blockchain.
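
Automated validation for retraining can be as simple as a promotion gate: a retrained model replaces the production model only if it clears an accuracy floor and does not regress. The sketch below assumes models are plain callables and uses a toy holdout set. The 0.95 floor mirrors the accuracy target stated above, and everything else is illustrative:

```python
def accuracy(model, holdout) -> float:
    """Fraction of holdout examples the model labels correctly."""
    correct = sum(1 for features, label in holdout if model(features) == label)
    return correct / len(holdout)


def should_promote(candidate_acc: float, production_acc: float,
                   floor: float = 0.95, min_gain: float = 0.0) -> bool:
    """Promote only if the candidate clears the floor and does not regress."""
    return candidate_acc >= floor and candidate_acc - production_acc >= min_gain


# Toy holdout: (claim amount, needs_review label).
holdout = [(500, 0), (900, 0), (1200, 1), (1600, 1), (2000, 1)]


def production_model(amount: float) -> int:
    return int(amount > 1500)


def candidate_model(amount: float) -> int:
    return int(amount > 1000)


candidate_acc = accuracy(candidate_model, holdout)
production_acc = accuracy(production_model, holdout)
promote = should_promote(candidate_acc, production_acc)
```

Keeping the gate separate from the training code means the same check can run in a scheduled retraining job and in a manual release review.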

Success Metrics and Expected Outcomes

Immediate Benefits (6-12 Months)

- Cost Reduction: 20-40% decrease in processing costs through automation and optimized resource allocation.

- Processing Speed: 50-75% reduction in claim resolution times, achieving sub-hour processing for routine claims.

- Decision Accuracy: 95% accuracy in automated claim decisions, validated through A/B testing.

- Customer Satisfaction: 30% improvement in Net Promoter Score (NPS) due to faster, transparent processes.

Long-Term Transformation (24-36 Months)

- Predictive Prevention: 15-20% reduction in claim frequency through proactive risk mitigation.

- Ecosystem Integration: Seamless connectivity with external stakeholders, reducing data exchange latency by 80%.

- Proactive Risk Management: Real-time risk scoring with 90%+ accuracy, enabling dynamic pricing adjustments.

- Customer Experience: Industry-leading CSAT scores (>85%) through personalized, AI-driven interactions.

Conclusion

The transition to AI-driven claims processing demands a robust technical foundation, strategic implementation, and continuous evolution. By leveraging advanced AI techniques, scalable cloud architectures, and real-time data processing, insurers can achieve significant operational efficiencies while positioning themselves for future innovations.

The path forward requires a fundamental shift from viewing data as a byproduct of operations to recognizing it as the strategic foundation that enables AI excellence. Organizations that invest in unified data platforms, advanced analytics capabilities, intelligent security frameworks, and comprehensive metadata management will not only survive the digital transformation but will emerge as industry leaders.

The window for competitive advantage remains open, but it is narrowing rapidly. Insurers who begin their AI-driven transformation journey today—starting with robust insurance data platform strategies—will be positioned to capitalize on emerging opportunities while delivering the efficient, accurate, and customer-centric claims processing that defines market leadership in the digital age. The question is not whether AI will transform insurance claims processing, but whether your organization will lead or follow this inevitable evolution.

Frequently Asked Questions (FAQs)

- What is an insurance data platform and why is it essential for AI claims processing?

An insurance data platform is a comprehensive infrastructure that consolidates, manages, and processes all insurance-related data from multiple sources including policy systems, claims databases, customer interactions, and external data feeds. It's essential for AI claims processing because artificial intelligence algorithms require high-quality, integrated data to make accurate predictions, detect fraud, and automate decision-making. Without a robust data platform, AI applications cannot access the comprehensive information needed for effective claims processing.

- How can AI reduce insurance claims processing costs?

AI reduces insurance claims processing costs through several mechanisms: automated claim assessment eliminates manual review for routine cases, reducing labor costs by up to 75%; real-time fraud detection prevents fraudulent payments that cost the industry over $300 billion annually; predictive analytics optimize resource allocation and identify high-risk claims early; and straight-through processing handles simple claims without human intervention, dramatically reducing processing time and associated costs.

- What are the main challenges with legacy insurance data systems?

Legacy insurance data systems face three primary challenges: they cannot handle the velocity, variety, and volume of modern data streams required for AI applications; they operate in silos that prevent the comprehensive data view necessary for effective AI algorithms; and they rely on batch processing that introduces delays incompatible with real-time AI decision-making. These limitations prevent insurers from implementing effective AI-driven claims processing solutions.

- How does data integration improve AI insurance claims processing?

Data integration improves AI insurance claims processing by providing algorithms with comprehensive, consistent information from all relevant sources. When policy data, claims history, customer interactions, and external data feeds are seamlessly integrated, AI systems can make more accurate decisions, identify patterns across multiple data sources, detect sophisticated fraud schemes, and provide consistent customer experiences. Integration also eliminates data duplication and inconsistencies that can compromise AI model accuracy.

- What security measures are needed for AI-driven insurance data platforms?

AI-driven insurance data platforms require unified security frameworks that protect sensitive information throughout the entire processing lifecycle. This includes encryption for data at rest and in motion, role-based access controls, comprehensive audit logging, real-time threat detection, and automated compliance monitoring. Security measures must address data privacy regulations like GDPR and CCPA while enabling AI algorithms to access necessary information for processing claims effectively.

- How can insurers measure the ROI of AI claims processing investments?

Insurers can measure AI claims processing ROI through multiple metrics: cost reduction in claims processing operations (typically 20-40% reduction achievable); fraud prevention savings (measured against historical fraud losses); processing time improvements (up to 75% faster resolution); customer satisfaction scores and reduced complaint volumes; and accuracy improvements in claim assessments and settlement amounts. Leading insurers report millions in annual savings from comprehensive AI implementation.