Other recent blogs

Let's talk

Reach out, we'd love to hear from you!

Let’s start with a reality most organizations are already facing: Agentic AI and Large Language Models (LLMs) have become the most crucial operational layers of modern AI systems within the organizational puzzle of operational excellence. Agentic AI enables systems to plan, decide, and execute tasks autonomously—reducing manual intervention and accelerating workflows in process-heavy environments. LLMs, on the other hand, act as the cognitive layer—powering reasoning, language understanding, and decision support across use cases like customer service, knowledge retrieval, and automation.

Together, they form a powerful combination, which is essential to building an intelligent AI system:

- Agents handle execution and orchestration

- LLMs handle intelligence and reasoning

But this combination comes with a cost curve that most organizations underestimate. While performance improves, cost scales faster than expected due to

- Output tokens cost 3x to 10x more than input tokens

- Poorly optimized systems inflate costs by 30% to70%

- Approx 60% of LLM calls in enterprise systems are redundant (due to repeated queries or lack of caching)

This is where the hidden costs of building AI start to surface. Most CEOs fail to understand that they are only optimizing for speed to launch and are dramatically missing the cost to operate. The result? Their AI-led systems, which worked perfectly in demos, have repeatedly failed to perform after launch and have become a financially unsustainable liability in production.

That’s why LLM cost optimization is no longer a backend concern for AI enterprise adoption and is now considered a core pillar of any AI Ops strategy 2026 and beyond.

Why enterprise LLM efficiency is now a boardroom metric?

At present, CEOs are leaving no stone unturned to seize the agentic AI advantage by bringing LLMs, frameworks, and emerging technology together to ride the new wave of operational excellence, flexibility, and innovation. Here is a number that should get a CIO's attention: most enterprises are significantly underestimating their actual LLM costs.

This LLM pricing analysis shows that output tokens typically cost 3 to 5 times more than input tokens, and in some models can cost upto 8 times as much, yet most cost models are still built around input pricing alone.

A typical pricing assumption might look like this: $0.15 per million tokens.

But in reality, once output tokens (which cost 3 to 10 times more) are factored in, the blended cost can rise to $1.35 per million tokens or more—creating a 9x gap between projected and actual spend.

This is not a pricing issue but an architecture issue.

In traditional software, the budget conversation is predictable: build costs, server costs, scale costs. In generative AI, a new pattern has emerged. Teams spend carefully to launch, then discover that the economics of inference were never modeled at all. The $3,000 chatbot that costs $30,000 a month to run is not a hypothetical—it reflects the hidden costs of building AI without a cost-aware architecture. This is why leading organizations prioritize specialized Generative AI Development Services that integrate financial modeling into the initial design phase.

In LLM-powered systems, costs are dynamic and often invisible because the core is enterprise-grade LLM efficiency and the building of systems that scale economically. LLM works by compounding everything quietly.

For Example:

Retry loops, over-retrieval in RAG pipelines, unrouted traffic to premium models, and ungoverned agent loops all add to the LLM API burn rate without triggering standard monitoring alerts. Here’s how:

- Automated retry loops in AI double or triple token usage

- Poor retrieval design increases input tokens unnecessarily

- Lack of LLM model routing sends all traffic to expensive models

- Missing AI cost anomaly detection delays visibility into overspending

This is why LLM cost optimization must be treated as a first-class engineering discipline, not a late-stage cost-cutting exercise. Organizations that embed efficiency early avoid accumulating AI technical debt—and gain a structural advantage in scaling AI sustainably.

Why is cost optimization for LLM deployments a strategic imperative?

Gartner has forecast that by 2026, AI services cost will become a chief competitive factor, potentially surpassing raw model performance in importance. Global generative AI spending is expected to reach $644 billion in 2025. Approximately 72% of businesses plan to increase AI budgets, and nearly 40% already spend over $250,000 annually on LLM initiatives. Yet 95% of generative AI projects fail to meet expectations, with a significant share derailed not by model quality but by uncontrolled operational costs.

The strategic imperative is this: LLM deployments that are not cost-optimized at the architecture level become liabilities at scale. A build is only cheap until the API bill arrives. When they do, the model that looked affordable in a sandbox can consume six figures annually in production, with no clear path to reduction without a full architectural rework.

Enterprise LLM efficiency is not about compromising model quality—it is about designing systems where cost is treated as a first-class engineering constraint alongside latency, reliability, and accuracy. Organizations that ignore this early accumulate AI technical debt that becomes expensive to reverse.

What is the true cost of LLM infrastructure?

Most cost models generally stop at token pricing, which is a blunder when it comes to LLM token optimization. The true cost of LLM infrastructure comprises four layers, and token costs are typically the smallest.

- Token costs are the visible layer. Output tokens are priced 3 to 8 times higher than input tokens across all major providers. In the 2026 market, the median output-to-input price ratio sits at approximately 4x. A documentation generator or agentic workflow with long outputs will burn money rapidly, even at discounted per-token rates.

- Infrastructure and integration costs are the hidden layer. Industry benchmarks indicate that infrastructure and integration costs add 20-40% to direct API spend for mature deployments. This includes API gateway and orchestration overhead, monitoring and observability tooling, error handling and retry logic, and security and compliance review.

- Architectural waste is the compounding layer. ReAct-style agentic systems, which are standard in enterprise deployments, reload context at every reasoning step, generate reasoning traces that produce no output, and trigger retry loops when extractions fail. These patterns inflate token consumption by 3 to 10 times compared to optimized architectures. A single document review workflow analyzed in production consumed approximately 250,000 tokens under a naive ReAct pattern versus 15,000 tokens under a compiled, structured execution model. That is a 16x cost difference for identical output quality.

- Operational costs are the long-term layer. Model drift requires periodic fine-tuning, which incurs fees on managed platforms. Idle GPU capacity in self-hosted deployments erodes cost advantages rapidly; a self-hosted model at 10% utilization costs 10 times as much per token as the same model at optimal utilization. Compliance audits for HIPAA, SOC2, or ISO add 5-15% to annual operational budgets.

A realistic total budget for an enterprise LLM deployment is approximately 1.7 times the base token calculation, before factoring in architectural inefficiency.

What are industry benchmarks and spending patterns for enterprise LLM in 2026?

LLM API prices dropped approximately 80% between early 2025 and early 2026. Here’s the proof - GPT-4o equivalent input pricing fell from $5.00 to $2.50 per million tokens. The cost of compute per million tokens at GPT-4 quality has fallen from $30 in 2023 to under $1 in 2026, a significant reduction.

Despite this, enterprise LLM bills are not shrinking, but they are growing. The reason is consumption growth outpacing price reduction, combined with architectural waste that was never budgeted for. A production case documented by practitioners showed a client's API bill climbing 40% month-over-month with no new users, driven entirely by a silent retry loop triggered when the model returned malformed JSON approximately 6% of the time.

Tiered model routing benchmarks indicate that routing 70% of queries to budget models, 20% to mid-tier models, and 10% to premium models can reduce the average per-query cost by 60-80% compared to routing all traffic through a single premium model. Prompt caching from major providers, including Anthropic at 10% of the base input price for cache reads and OpenAI at 50 to 90% off, can reduce input costs by 70 to 90% on the cached portion for enterprise applications with consistent system prompts.

The LLM market is valued at $5.03 billion in 2025 and is projected to reach $15.64 billion by 2029, growing at a 49.6% CAGR. Organizations that fail to optimize now will find cost reduction progressively harder as usage volumes scale.

What are the proven LLM cost optimization strategies for improved AI ops efficiency?

1. Semantic similarity caching

Approximately 80% of enterprise queries are variations of a core set of questions. Semantic caching uses vector embeddings to identify queries within a cosine similarity threshold (typically 0.95) and serves cached responses rather than re-invoking the model. This eliminates the API call entirely. With cache hit rates above 60%, it is one of the most effective levers to reduce LLM API burn rate.

2. LLM model routing

Not every query requires a premium model. Intelligent routing classifies query complexity before invoking the model and directs simple tasks to small, cost-efficient models while reserving high-capability models for complex reasoning. A typical enterprise routing split of 70% budget, 20% mid-tier, and 10% premium reduces average per-query cost by 60 to 80% with minimal quality degradation. Automated price-based routing can also shift traffic between providers when quality is equivalent. By routing based on complexity, enterprises can significantly improve enterprise LLM efficiency while maintaining output quality.

3. Token pruning for RAG

RAG pipelines are a major source of token inflation. Default configurations typically pass 4 to 8 full documents per query. Aggressive retrieval tuning, such as limiting to 2 to 3 shorter chunks and truncating irrelevant sections, can cut input tokens by more than 50% with no loss in precision. Limiting retrieval size and removing irrelevant content can cut token usage by over 50%, making it a critical component of LLM token optimization.Token pruning for RAG also involves context window management: retaining entities, decisions, and confirmations while summarizing or dropping conversational filler from chat histories.

4. Prompt optimization and output length control

Every unnecessary token in a system prompt or instruction set is a recurring cost across every single API call. Stripping greetings, repeated context, and verbose instructions from prompts reduces input tokens. Setting hard output length limits prevents the model from generating unnecessarily long responses. Together, these changes deliver 10 to 30% cost reduction with no architectural rework.

5. Automated retry loop governance

Retry logic without token awareness is one of the most common and costly architectural mistakes in enterprise LLM deployments. Automated retry loops that trigger on malformed JSON or hallucinated outputs without a hard retry limit can double or triple costs for affected request types. Uncontrolled automated retry loops in AI systems are a major hidden cost driver. Implementing retry limits, schema validation, and AI cost anomaly detection prevents runaway spending. Hard retry caps and cost anomaly detection alerts prevent uncapped retry storms from compounding.

6. Batch processing for non-realtime workloads

Real-time inference is more expensive than batch inference. Major providers offer batch API pricing at 50% of standard rates. Workloads such as summarization, data labeling, document ingestion, and code generation do not require real-time responses. Routing these to batch endpoints is an immediate, low-engineering-effort cost reduction.

How do LLM costs escalate without optimization?

The escalation follows a predictable pattern, though most teams only recognize it in retrospect.

Stage one is the prototype illusion. Prototypes handle low volumes and hide structural inefficiency. Token costs at 100 daily queries look negligible. At 100,000 daily queries, the same architectural decisions cost thousands per day.

Stage two is the 200 OK mirage. Standard monitoring tools such as Datadog and Grafana track uptime and error rates. They do not track token waste. A system with 100% uptime and 0% error rate can still be burning 40% more tokens than necessary due to retry loops, redundant context loading, or unoptimized RAG retrieval passing 4 to 8 full documents into a prompt when only a paragraph is needed. The system is "working." It is just failing expensively.

Stage three is agent amplification. Multi-agent workflows, long contexts, and circular prompting from ungoverned agents multiply spend overnight. A single runaway agent, or one intern with API access and no budget guardrails, can spike daily burn by thousands before any alert fires.

Stage four is AI technical debt. Unoptimized prompts, unversioned system instructions that balloon token counts over time, and RAG pipelines that were never tuned for retrieval precision accumulate as silent debt. Each iteration adds cost without adding a corresponding control mechanism.

Without AI cost anomaly detection that alerts on spend rather than errors, most enterprises reach stage four before they see the problem.

Comparative analysis of cost optimization strategies

| Strategy | Cost reduction range | Engineering effort | Time to impact |

|---|---|---|---|

| Semantic similarity caching | 40 to 60% | Medium | Weeks |

| LLM model routing | 60 to 80% | Medium to high | 1 to 2 months |

| Token pruning for RAG | 30 to 50% | Medium | Weeks |

| Prompt optimization | 10 to 30% | Low | Days |

| Batch processing | 50% on eligible workloads | Low | Days |

| Retry loop governance | 5 to 40% depending on error rate | Low to medium | Weeks |

| Prompt caching (provider-native) | 70 to 90% on cached input | Low | Days |

Key future trends shaping LLM Cost Optimization

- Optimizing agent workflows (beyond ReAct):

As agentic systems scale, the focus is shifting to reducing token overhead in multi-step execution. Instead of iterative reasoning loops, workflows are being structured into deterministic pipelines with fewer LLM calls, controlled context passing, and minimized intermediate token generation. - LLM Model routing by task complexity:

Systems are increasingly adopting routing layers that classify query complexity upfront. Low-complexity tasks (classification, extraction) are handled by small models, while high-reasoning tasks are escalated—optimizing both cost and latency without compromising output quality. - Semantic similarity caching and response reuse:

Rather than recomputing similar queries, systems are implementing semantic caching using embeddings to detect near-duplicate inputs. Cached responses or intermediate outputs are reused, reducing redundant inference cycles, lowering overall token consumption and stabilizing their LLM API burn rate. - Shift to domain-specific small models (SLMs):

Fine-tuned, domain-specific models are replacing general-purpose LLMs for targeted use cases. These models operate with smaller context windows, lower compute requirements, and higher precision within constrained domains like finance, healthcare, or support operations.This shift reduces dependency on large models and addresses key hidden costs of building AI, especially in high-volume workloads. - Automated prompt compression:

Prompt optimization is becoming system-driven. Techniques like token pruning for RAG are becoming automated within pipelines. Systems are evolving to pass only high-relevance context, improving both accuracy and LLM token optimization outcomes. - Cost-aware orchestration and governance:

The broader shift is toward embedding cost controls at the architecture level—across orchestration, memory, and model selection—rather than treating optimization as a post-processing step. Future systems will embed AI cost anomaly detection and budget controls directly into orchestration layers. This ensures cost is managed in real time, reducing the risk of runaway spend and long-term AI technical debt.

What is the implementation roadmap for enterprise LLM cost optimization?

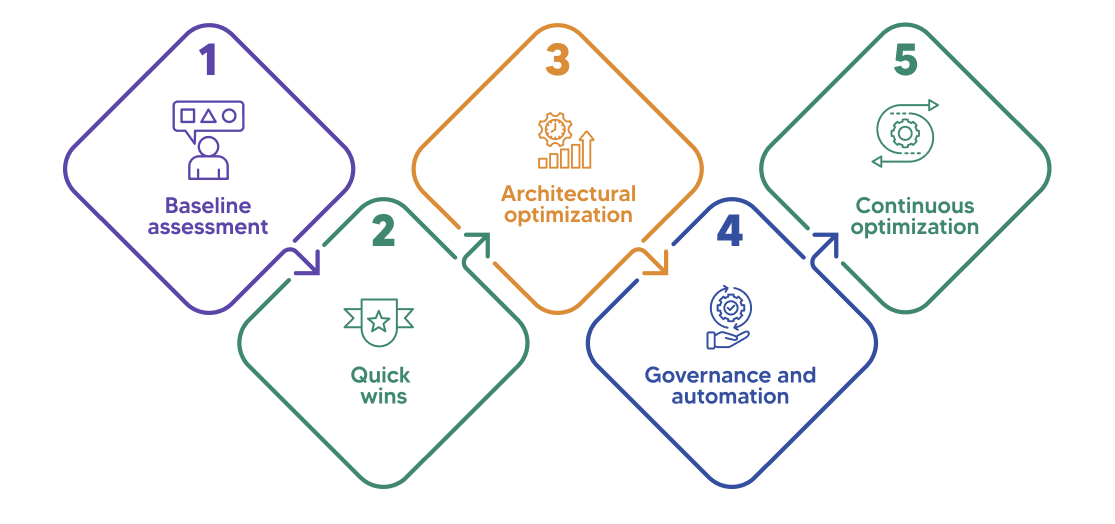

Phase 1: Baseline assessment

Before optimization, establish what you are actually spending and where. Measure current LLM usage patterns, cost per use case, infrastructure utilization, and token consumption metrics at the query level. Without a baseline, optimization efforts cannot be validated and cost anomalies cannot be identified. Define KPIs at this stage: cost per query, tokens per query, cache hit rate, model usage mix, failure and retry rates, and GPU utilization.

Phase 2: Quick wins

Immediate opportunities that deliver 10 to 30% cost savings within weeks include prompt optimization to strip unnecessary tokens, output length controls, implementation of provider-native prompt caching, and model downgrading for simple or repetitive tasks. These require low engineering effort and produce measurable results on the next billing cycle.

Phase 3: Architectural optimization

This phase addresses structural inefficiency. Implement RAG pipelines with tight retrieval constraints and token pruning. Introduce model routing with complexity classification. Optimize infrastructure autoscaling based on demand signals rather than time-based schedules. Use spot instances and batch APIs for eligible workloads. Redesign data pipelines to eliminate context reloading in agentic workflows.

Phase 4: Governance and automation

Deploy cost monitoring dashboards that alert on spend anomalies, not just errors. Implement per-user and per-agent budget controls to prevent runaway spend. Automate scaling policies. Integrate AI FinOps practices, treating inference cost as a financial metric with ownership, accountability, and reporting cadence equivalent to cloud infrastructure spend.

Phase 5: Continuous optimization

Run regular cost audits against performance benchmarks. Test model updates and fine-tuned alternatives against cost-quality tradeoffs on an ongoing basis. The LLM pricing landscape is changing rapidly, with prices dropping approximately 80% year-over-year. Re-run cost models quarterly to capture repricing. What was the most cost-effective architecture six months ago may not be today.

Simplify AI ops: How Kellton helps enterprises optimize LLM costs

LLM adoption is accelerating, but without structured LLM cost optimization, rapid scale quickly becomes financial risk. Enterprises that embed cost controls into their AI Ops strategy 2026—across routing, caching, and architecture—will achieve sustainable enterprise LLM efficiency while avoiding the long-term burden of AI technical debt.

Kellton works with enterprises to build cost-aware AI architectures from the ground up. From LLM token optimization to audits and model routing implementation to AI governance frameworks, Kellton's AI engineering experts reduce LLM API burn rates without trading off performance. Whether you are dealing with runaway inference costs or scaling a deployment that was never optimized, Kellton brings the AI ops expertise to make it financially sustainable.

Ready to reduce your LLM infrastructure cost?

Frequently asked questions on LLM Cost Optimization

Q1. What are the biggest cost drivers in LLM deployments?

Token usage, particularly output tokens priced 3 to 8 times higher than input, is the primary driver. Infrastructure overhead adds 20 to 40% on top of API spend. Architectural waste from retry loops, context reloading in agentic systems, and over-retrieval in RAG pipelines compounds both.

Q2. How can enterprises reduce LLM costs quickly?

The fastest wins are prompt optimization, provider-native prompt caching (50 to 90% off cached inputs), output length controls, and routing simple queries to smaller models. These can be implemented in days with no architectural rework and typically deliver 10 to 30% cost reduction within weeks.

Q3. Is RAG always cost-effective?

RAG reduces token costs by grounding responses in retrieved context rather than relying on model memory, but poorly tuned RAG pipelines that pass 4 to 8 full documents per query can significantly inflate input token counts.

Q4. How important is governance in LLM token optimization?

Without per-user budget controls, retry guardrails, and spend anomaly alerts, a single misconfigured agent or uncapped retry loop can erase weeks of optimization gains. Governance converts cost optimization from a one-time project into a sustainable operational capability.

Q5. Can open-source models reduce costs?

Yes, for high-volume and stable workloads where GPU utilization can be maintained above 50%. Self-hosted models can reduce costs by up to 78% at scale. However, they introduce infrastructure management, ML engineering for quantization and optimization, and compliance overhead.